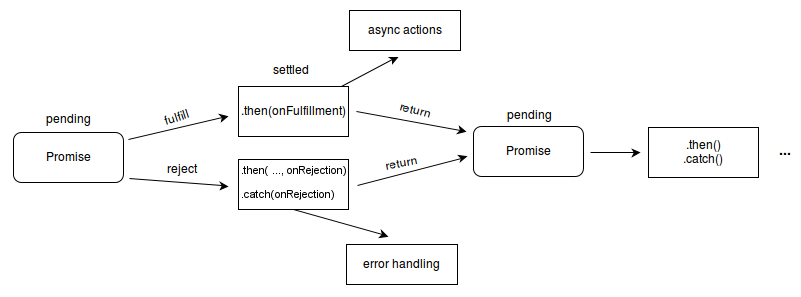

It’s also important to note that while Google states the output of the crawling process as “HTML” on the image above, in reality, they’re crawling and storing all of the resources needed to build the page: HTML pages, JavaScript files, CSS, XHR requests, API endpoints, and more.

There are a lot of systems obfuscated by the term “Processing” in the image. I’m going to cover a few of these that are relevant to JavaScript.

Google does not navigate from page to page as a user would. Part of Processing is to check the page for links to other pages and files needed to build the page.
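To make the idea of checking a page for links and required files more concrete, here is a minimal sketch in Python. It is not how Google's systems work internally; it only illustrates pulling `<a href>` targets and resource URLs (scripts, stylesheets, images) out of a downloaded HTML response. The class name `ResourceAndLinkParser`, the helper `extract`, and the example URLs are all hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ResourceAndLinkParser(HTMLParser):
    """Collects page links and the files a page references in its raw HTML."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []      # <a href> targets: candidate pages to crawl next
        self.resources = []  # scripts, images, stylesheets needed to build the page

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and attrs.get("href"):
            # Resolve relative URLs against the page URL.
            self.links.append(urljoin(self.base_url, attrs["href"]))
        elif tag in ("script", "img") and attrs.get("src"):
            self.resources.append(urljoin(self.base_url, attrs["src"]))
        elif tag == "link" and attrs.get("href"):
            rel = (attrs.get("rel") or "").lower().split()
            if "stylesheet" in rel:
                self.resources.append(urljoin(self.base_url, attrs["href"]))


def extract(html, base_url):
    """Return (links, resources) found in the given HTML string."""
    parser = ResourceAndLinkParser(base_url)
    parser.feed(html)
    return parser.links, parser.resources
```

Calling `extract(html, "https://example.com/blog/")` on a downloaded response returns two lists of absolute URLs. Note that this only sees what is in the raw HTML; links or resources injected later by JavaScript would not appear until the page is rendered.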


How Google processes pages with JavaScript

In the early days of search engines, a downloaded HTML response was enough to see the content of most pages. Thanks to the rise of JavaScript, search engines now need to render many pages as a browser would so they can see content the way a user sees it.

The system that handles the rendering process at Google is called the Web Rendering Service (WRS). Google has provided a simplistic diagram to cover how this process works.

The crawler sends GET requests to the server. The server responds with headers and the contents of the file, which then get saved. The request is likely to come from a mobile user-agent since Google is mostly on mobile-first indexing now. You can check how Google is crawling your site with the URL Inspection Tool inside Search Console. When you run this for a URL, check the Coverage information for “Crawled as,” and it should tell you whether you’re still on desktop indexing or mobile-first indexing.

The requests mostly come from Mountain View, CA, USA, but Google also does some crawling for locale-adaptive pages from outside the United States. I mention this because some sites will block visitors from a specific country or using a particular IP, or treat them differently, which could cause your content not to be seen by Googlebot.

Some sites may also use user-agent detection to show content to a specific crawler. Especially with JavaScript sites, Google may be seeing something different than a user. This is why Google tools such as the URL Inspection Tool inside Google Search Console, the Mobile-Friendly Test, and the Rich Results Test are important for troubleshooting JavaScript SEO issues. They show you what Google sees and are useful for checking whether Google is being blocked and whether it can see the content on the page. I’ll cover how to test this in the section about the Renderer because there are some key differences between the downloaded GET request, the rendered page, and even the testing tools.
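As a rough illustration of the crawler step (a GET request sent with a mobile user-agent) and of how user-agent detection can change what comes back, the sketch below requests the same URL with two different `User-Agent` headers and compares the raw responses. The function names and the browser user-agent string are assumptions made for this example. The Googlebot string follows the general pattern Google documents for its smartphone crawler, with the Chrome version left as the placeholder `W.X.Y.Z`. Sending this header from your own machine does not make the request come from a Google IP, so a site that verifies Googlebot by IP may still respond differently, and the raw GET response is not the rendered page.

```python
import urllib.request

# Illustrative user-agent strings (assumptions for this sketch).
GOOGLEBOT_SMARTPHONE_UA = (
    "Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) "
    "AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 "
    "(compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
)
BROWSER_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
    "AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
)


def build_request(url, user_agent):
    """Build a GET request that carries the given User-Agent header."""
    return urllib.request.Request(
        url, headers={"User-Agent": user_agent}, method="GET"
    )


def fetch(url, user_agent, timeout=10):
    """Send the request and return (status, body bytes).

    urlopen raises urllib.error.HTTPError for 4xx/5xx responses,
    which is itself a useful signal that a user-agent is being blocked.
    """
    request = build_request(url, user_agent)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status, response.read()


def compare_user_agents(url):
    """Fetch a URL as a crawler-like and a browser-like client and compare."""
    crawler_status, crawler_body = fetch(url, GOOGLEBOT_SMARTPHONE_UA)
    browser_status, browser_body = fetch(url, BROWSER_UA)
    return {
        "crawler_status": crawler_status,
        "browser_status": browser_status,
        "same_body": crawler_body == browser_body,
        "crawler_bytes": len(crawler_body),
        "browser_bytes": len(browser_body),
    }
```

Running `compare_user_agents("https://example.com/")` returns a small summary dictionary. Differing bodies are only a hint, since many pages vary between requests for unrelated reasons, so treat this as a first check and rely on Google's own testing tools for what Googlebot actually sees.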

Did you know that while the Ahrefs blog is powered by WordPress, much of the rest of the site is powered by JavaScript frameworks like React? Most websites use some kind of JavaScript to add interactivity and to improve user experience. Some use it for menus, pulling in products or prices, grabbing content from multiple sources, or in some cases, for everything on the site. The reality of the current web is that JavaScript is ubiquitous. The web has moved on from plain HTML, and as an SEO you can embrace that. Learn from JS devs and share SEO knowledge with them.